February 27, 2023

Implementing A/B Testing in Automotive

Software Updates and Their Pitfalls

Software updates introduce new features and bug fixes, but they can also create problems. At a high level, the issues range from compatibility problems, data loss, and security vulnerabilities to reduced performance and plain user dissatisfaction. Often, those issues cannot be fully detected in the testing phase before the release. The root cause of such deficiencies has to be identified and then debugged. The first step, however, is to find out how a new version performs compared to the prior version or to a competing new version.

Ideally, such tests run under realistic conditions and involve no risks to software, environment, and users. These two requirements aren’t easily reconciled, though. An important and well-established method to use here is A/B testing.

A/B Tests - Advantages and Preconditions

An A/B test is a controlled experiment that involves two variants (hence, A and B) whose metrics are compared. Clinical trials are the most prominent example, and it could even be said that the practice of clinical studies established the model for A/B testing. Another field that lends itself to such testing is user experience research, because it is fairly easy to show separate groups of users different interfaces and then compare how the users interact with them.

For A/B tests to provide a valid, quantified comparison between two variants and thus meaningful results, certain conditions must be met. The sample has to be large enough to achieve statistical significance, and the statistical units have to be sufficiently similar to one another while remaining distinct. Additionally, proper parameters for comparison have to be identified. Sometimes it is argued that beyond two parameters the statistics get overly complicated. However, this is an aspect that can be mastered, and it persists as a problem only for organizations not rooted in science or engineering.
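To make the sample size condition concrete, here is a minimal sketch of the standard normal-approximation formula for comparing the means of two equally sized groups. The function name, the default significance level of 0.05, and the default power of 0.8 are illustrative assumptions, not values taken from this article.

```python
import math
from statistics import NormalDist


def sample_size_per_group(sigma: float, min_effect: float,
                          alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate units needed in EACH group of a two-sided, two-sample test.

    sigma      -- assumed standard deviation of the metric (same in both groups)
    min_effect -- smallest difference in means that should be detectable
    alpha      -- accepted false-positive rate (assumed default)
    power      -- desired probability of detecting min_effect (assumed default)
    """
    if sigma <= 0 or min_effect <= 0:
        raise ValueError("sigma and min_effect must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value, two-sided
    z_power = NormalDist().inv_cdf(power)          # quantile for the power target
    n = 2 * ((z_alpha + z_power) * sigma / min_effect) ** 2
    return math.ceil(n)


# Halving the detectable effect roughly quadruples the required sample.
print(sample_size_per_group(sigma=1.0, min_effect=0.5))
print(sample_size_per_group(sigma=1.0, min_effect=0.25))
```

The formula is an approximation. With small groups or strongly skewed metrics, a t-based or simulation-based calculation may be more appropriate.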

A/B Testing in Tech and Software

Let’s turn to the question of using A/B testing in the area of tech and software. This type of test is commonly used by tech companies to test new features on a small percentage (for example, 1%) of the target audience and to collect core metrics from both treatment and control groups. The core metrics are defined by data scientists, and such a test provides reliable information regarding the impact of the feature without exposing the broader audience to risk, for example, if the feature turns out to have a security or financial deficiency.
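One common way to place a small, stable percentage of an audience in the treatment group is deterministic hash bucketing. The sketch below is illustrative only: `assign_group`, the experiment name, and the bucket resolution of 0.01% are assumptions made for the example, not part of any specific product.

```python
import hashlib


def assign_group(unit_id: str, experiment: str,
                 treatment_percent: float = 1.0) -> str:
    """Deterministically assign a user or device to 'treatment' or 'control'.

    Hashing the experiment name together with the unit ID keeps the
    assignment stable across calls and independent between experiments.
    """
    digest = hashlib.sha256(f"{experiment}:{unit_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # buckets 0..9999
    # treatment_percent=1.0 selects buckets 0..99, i.e. about 1% of units.
    return "treatment" if bucket < treatment_percent * 100 else "control"


if __name__ == "__main__":
    ids = [f"user-{i}" for i in range(100_000)]
    in_treatment = sum(assign_group(u, "new-feature") == "treatment" for u in ids)
    print(f"treatment share: {in_treatment / len(ids):.2%}")
```

Because the assignment depends only on the ID and the experiment name, no assignment table has to be stored, and the same unit always sees the same variant.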

A/B testing can also be used in software releases, for example, for Android/iOS apps. But today only a small group of companies is in a position to take advantage of this important tool. Developers of Android apps, even in big companies, typically have no way to A/B test the mobile apps themselves. Instead, the A/B testing of mobile apps in most tech companies takes place on the server side and relies on heavy investment in server-driven development, a trend that is caused by the lack of support for A/B tests, not by preference.

A/B Testing in Automotive

In the field of automotive software, the task of implementing an A/B test seems daunting. First, it requires the calculated, planned deployment of different software versions to a small fraction of a fleet consisting of hundreds of thousands of vehicles. Second, it comes with serious safety and security considerations. Third, it involves the risk of software regression, which means that after the update features work more slowly, stop working altogether, or use more memory or other resources. Software regression may also be triggered by changes to the environment in which the software is running, such as system upgrades, system patching, or a change as simple as the switch to daylight saving time.

This last aspect brings up the question of why verification on test devices is not enough. The reason is that software runs on a wide variety of devices. It is not practically achievable to test all specified combinations of all enumerated devices under all dynamic conditions. In automotive, in particular, there simply are too many. Road and weather conditions, for instance, can never be comprehensively simulated by internal testing. Another example would be an app for a ride-sharing service during the holiday season.

Using production vehicles to collect metrics helps software developers cover more areas and gain more confidence. The resulting question is how to implement A/B testing in automotive in a safe and secure way and with a reasonable degree of effort. Sibros offers options for A/B testing that are designed with automotive use cases in mind:

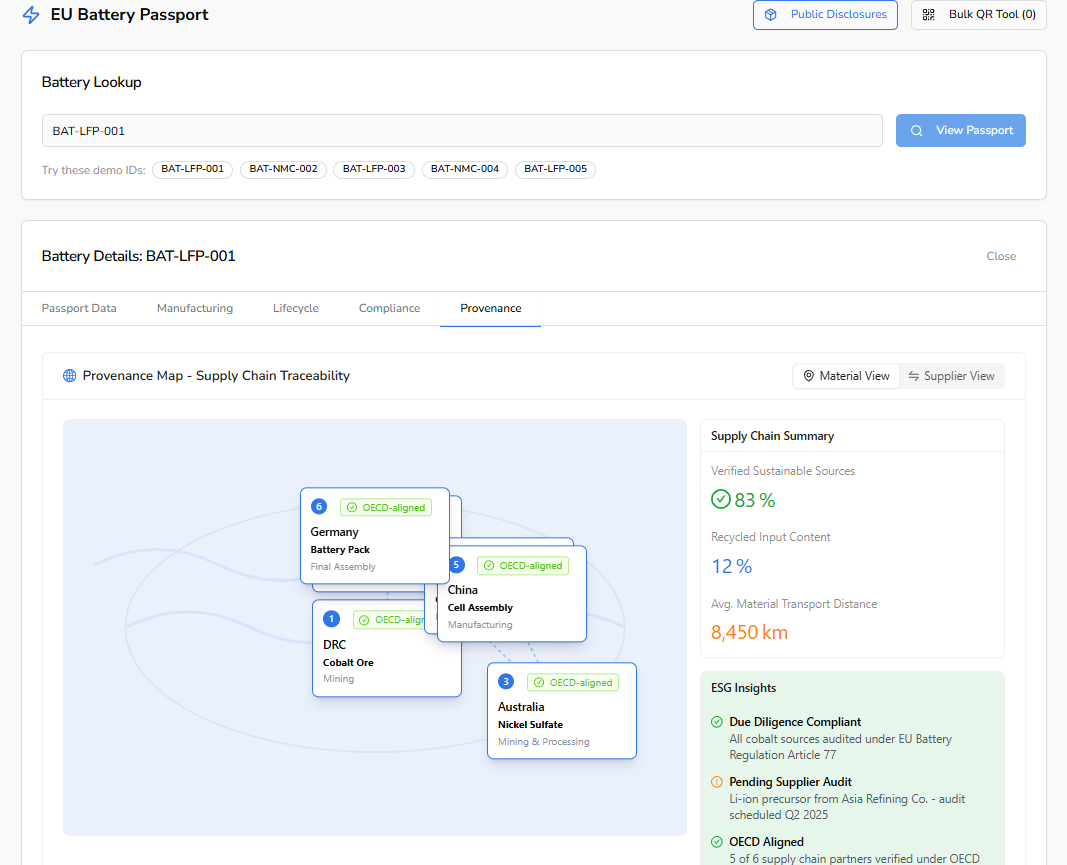

- Canary release - With Deep Updater, a multi-stage rollout to any percentage of targeted vehicles can be deployed, using vehicle architecture modeling. Sibros provides a flexible targeting mechanism to ship software to any device. The targeting can be based on a rich set of both static dimensions such as vehicle attributes and dynamic dimensions such as installed firmware version or latest location.

- Core metrics collection - With Deep Logger, core metrics from the component receiving the update (such as a battery control unit) can be streamed in real time, using complex conditional logging. Sibros provides a powerful data analytics dashboard to help customers easily identify regressions as well as improvements on specified metrics. For example, production data can show whether the battery consumes more or less power than it did with the older version and which vehicle model is affected most. Deep Logger is agnostic to in-vehicle hardware and does not depend on any external logging hardware.

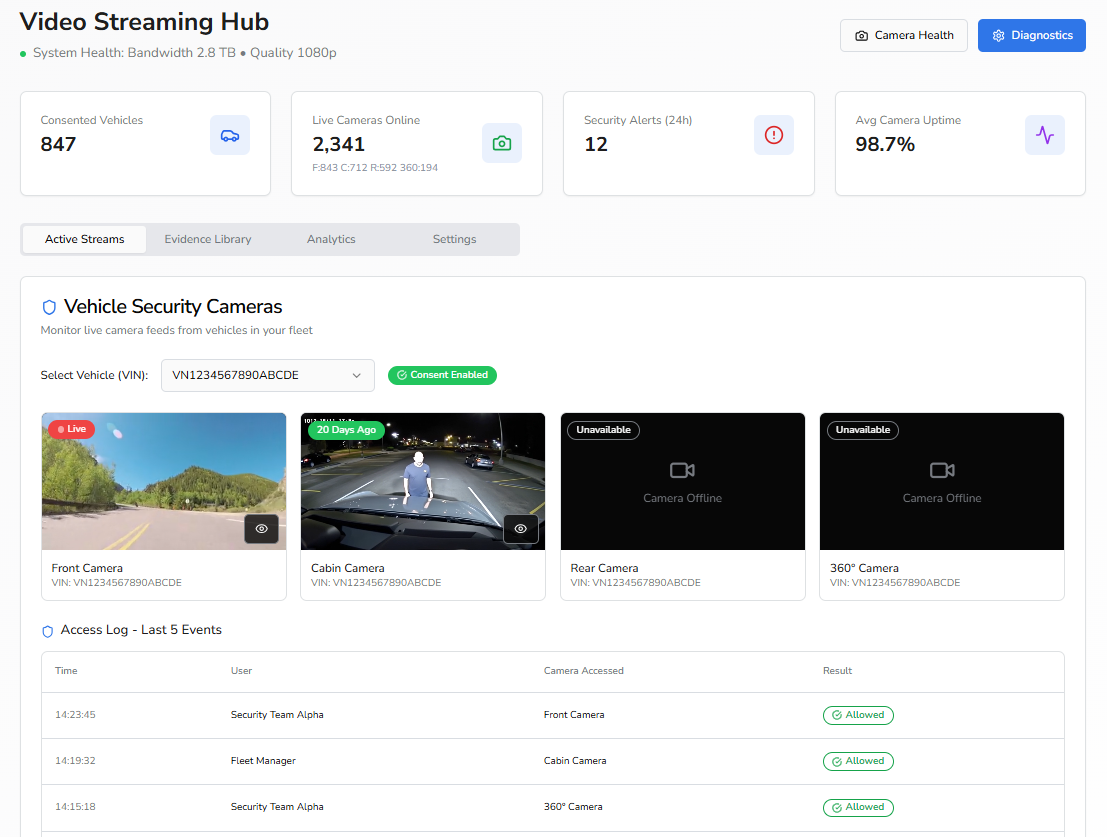

- Regression debugging - If a regression is detected in a new firmware release, Command Manager can be used to issue specific command sequences to the sampled treatment devices in order to collect granular details from the vehicle and identify the root cause of the regression.
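The canary and metrics steps above can be sketched generically as follows. This is not the Sibros API: the `Vehicle` fields, the campaign name, and all function names are hypothetical and only illustrate how targeting on static and dynamic dimensions, staged percentages, and a treatment-versus-control comparison fit together.

```python
import hashlib
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Vehicle:
    vin: str
    model: str      # static dimension (vehicle attribute)
    firmware: str   # dynamic dimension (installed firmware version)
    region: str     # dynamic dimension (latest known location)


def rollout_bucket(vin: str, campaign: str) -> int:
    """Map a vehicle to a stable bucket in 0..99 for this campaign."""
    digest = hashlib.sha256(f"{campaign}:{vin}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def select_for_stage(fleet, campaign, percent, model, from_firmware):
    """Return targeted vehicles whose bucket falls below the stage percentage.

    Buckets are stable, so every later (larger) stage contains all vehicles
    of the earlier stages.
    """
    return [
        v for v in fleet
        if v.model == model
        and v.firmware == from_firmware
        and rollout_bucket(v.vin, campaign) < percent
    ]


def relative_change(control, treatment):
    """Relative change of the treatment mean versus the control mean."""
    baseline = mean(control)
    return (mean(treatment) - baseline) / baseline


if __name__ == "__main__":
    fleet = [
        Vehicle(f"VIN{i:05d}", "sedan" if i % 2 else "suv", "1.0.0", "EU")
        for i in range(2000)
    ]
    for percent in (1, 10, 100):
        stage = select_for_stage(fleet, "bcu-1.1.0", percent, "sedan", "1.0.0")
        print(f"stage {percent:>3}%: {len(stage)} vehicles")
    # Made-up power readings, used only to show the comparison step.
    print(f"{relative_change([10.0, 10.2, 9.8], [10.9, 11.1, 11.0]):+.1%}")
```

In practice, the raw relative change would be paired with a significance test before a release is flagged as a regression.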

Beyond Software A/B Testing

In addition to offering these tools for proper A/B testing, Sibros also supports remote feature experiments. Beyond projects that involve the calibration of parameters, Sibros’ stack allows customers to experiment with a feature by toggling certain flags in their firmware on or off without updating the firmware itself.

For example, an image that is shipped to all devices can support an advanced battery algorithm that is turned off by default. With Sibros’ feature A/B testing, customers can compare how the algorithm behaves in production. The algorithm can be switched on via a remote command on specific target devices, which allows customers to run feature experiments as remote A/B tests without shipping a new image.
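The mechanism can be pictured with a small, self-contained sketch. Everything in it is hypothetical (the `Device` class, the `advanced_battery_algo` flag name, and the command format) and does not describe Sibros’ actual interfaces. It only shows a flag that ships disabled and is enabled remotely for a chosen treatment group.

```python
from dataclasses import dataclass, field


@dataclass
class Device:
    device_id: str
    # The flag ships with the firmware image but is off by default.
    flags: dict = field(default_factory=lambda: {"advanced_battery_algo": False})

    def handle_command(self, command: dict) -> None:
        """Apply a remote 'set_flag' command; ignore flags the image does not know."""
        if command.get("type") == "set_flag" and command.get("flag") in self.flags:
            self.flags[command["flag"]] = bool(command["value"])


def enable_for(devices, target_ids, flag: str) -> None:
    """Send the same enable command to every device in the treatment group."""
    command = {"type": "set_flag", "flag": flag, "value": True}
    for device in devices:
        if device.device_id in target_ids:
            device.handle_command(command)


if __name__ == "__main__":
    fleet = [Device(f"dev-{i}") for i in range(5)]
    enable_for(fleet, {"dev-1", "dev-3"}, "advanced_battery_algo")
    for device in fleet:
        print(device.device_id, device.flags["advanced_battery_algo"])
```

Devices outside the target set keep the default, so they serve as the control group for the comparison.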

The Sibros Offer

The use of Sibros’ Deep Connected Platform (DCP) thus offers multiple advantages when conducting A/B tests. Moreover, DCP provides a 360° device data view over the full life cycle with a vertically integrated, all-in-one, end-to-end connectivity system for software updates, diagnostics, and logging with network stacks and bootloaders. DCP runs with minimal CPU and memory load and works on all major cloud providers, such as GCP, AWS, and Azure with horizontal scalability, portability, and abstraction.

New Opportunities

For OEMs and tier 1 suppliers, development, updates, and patches of software and firmware are rapidly turning into core tasks. Possessing the proper tools for A/B tests is crucial because A/B testing only helps when it is readily available and can be conducted with reasonable effort, adherence to safety and security norms, and on-demand scalability. Using Sibros’ DCP allows OEMs to deploy A/B tests on fleet fractions of any size. The platform is scalable, and the results can be logged in real time. Moreover, specific commands give further control over the process and allow deeper drill-downs. If you want to know more about the options afforded by Sibros’ DCP, talk to us.